I wrote the following update for my Principles of Macroeconomics students and thought it might just count as an update for Wats On My Mind.

In the first two minutes of class, I asked you how you would know how the economy is doing. Let’s focus on our three big areas: GDP, unemployment, and inflation.

Initial estimates are that GDP decreased by 4.8% in the first quarter (Jan-Mar). Let me comment on that a bit:

- That number is almost certainly inaccurate. It will be revised 3 months from now, 6 months from now, and be finalized 9 months from now. That is totally normal – as more and more data rolls in, our estimates get better. My bet is that the number is worse than that because closed firms won’t be reporting anything yet.

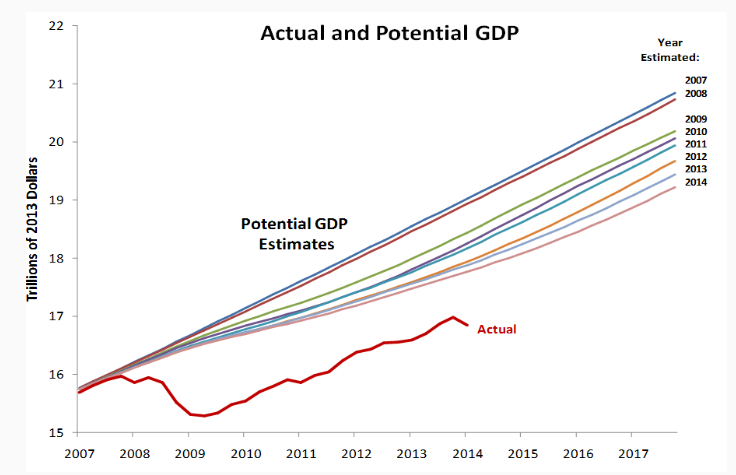

- The number for the second quarter will certainly be worse than that. We were only closed for 2-3 weeks in March, so the fact that we’re down that much in such a short window is a bad sign. We have already been closed longer in this quarter, and the careful, measured opening we’re doing right now – which I think is wise to prevent a new spike of cases – won’t make for an instantaneous rebound.

- This is as bad as we saw during the Great Recession, but faster. Again, my hope and expectation is that our recovery will also be faster.

- GDP dropped in the EU by 14%. So it could be a lot worse!
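
A note on reading these figures: U.S. quarterly GDP growth is conventionally quoted at an annualized rate, so a headline like 4.8% does not mean output shrank by 4.8% within the quarter itself. Here is a minimal sketch of the conversion, assuming the 4.8% above is an annualized rate. The function names are my own, for illustration only.

```python
def annualized_to_quarterly(annual_rate: float) -> float:
    """Implied one-quarter growth rate behind an annualized rate."""
    return (1.0 + annual_rate) ** 0.25 - 1.0


def quarterly_to_annualized(quarterly_rate: float) -> float:
    """Annualized rate if one quarter's growth repeated for four quarters."""
    return (1.0 + quarterly_rate) ** 4 - 1.0


# Assumption: the -4.8% first-quarter estimate is an annualized rate.
us_q1_annualized = -0.048
us_q1_quarterly = annualized_to_quarterly(us_q1_annualized)

print(f"Implied change within the quarter: {us_q1_quarterly:.2%}")
```

Under that assumption, the drop within the quarter works out to a bit over 1%. The same conversion applies to any other figure quoted on an annualized basis, which matters when comparing numbers across countries.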

The Bureau of Labor Statistics also released new numbers. So far 30 million Americans have filed for unemployment. That is roughly 18-19% of the workforce. This is officially, as expected, the highest unemployment rate since the Great Depression in the 1930s. The good news is that the number of new applicants has been going down each week, from 6.8 million at the end of March to “only” 3.8 million last week. (Recall: That’s still 4x larger than the previous high set in the 1980s.) The other bit of good news is that 90% of unemployed workers expect to return to their old job, while that number is usually only 40%. That gives me more encouragement that we could quickly bounce back.
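
The arithmetic behind those claims figures can be checked in a few lines. This is only a sketch using the numbers quoted above. The variable names are mine, and the workforce size is backed out from the 18-19% share rather than taken from an official release.

```python
# Figures quoted in the text, in millions of people.
total_claims = 30.0
share_low, share_high = 0.18, 0.19

# Workforce size implied by "30 million is roughly 18-19% of the workforce".
implied_workforce_max = total_claims / share_low
implied_workforce_min = total_claims / share_high

# Weekly new claims: end-of-March peak versus last week.
peak_weekly, latest_weekly = 6.8, 3.8
decline_from_peak = (peak_weekly - latest_weekly) / peak_weekly

print(f"Implied workforce: {implied_workforce_min:.0f}-{implied_workforce_max:.0f} million")
print(f"Weekly claims are down {decline_from_peak:.0%} from the peak")
```

So weekly filings have fallen by a bit under half from the peak, even though each new week still adds millions to the total.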

Inflation is DOWN. If this were primarily a supply shock, we would be seeing overall higher prices. That means the drop in aggregate demand is bigger than the supply shock. To a Keynesian or a Monetarist, that also means that all the fiscal and monetary stimulus we have done so far is not enough and more needs to be done. To a Classical economist, the thing that needs to be fixed is still supply – demand itself is not terribly important. A Hayekian, of course, thinks all this stimulus is making things worse – it messes with the price signals markets rely on.

To get a rough estimate of where inflation is going, I have been recommending comparing TIPS bonds to nominal bonds, because the difference between the interest rates on those bonds (the TIPS spread) is the market’s best guess of inflation. As you can see here, the TIPS spread fell from 1.8% in 2019 to 0.5% at the end of March. During April, it has recovered slightly to 1-1.2%. A very rough guesstimate based on that suggests we would need a stimulus 3x as large as we have done right now to return inflation expectations to normal. !!!
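
For anyone who wants to see the TIPS spread as arithmetic, here is a minimal sketch. The two yields are hypothetical placeholders chosen to give a 1.0% spread, not market quotes, and the "share recovered" calculation is just one way to read the numbers above, not necessarily how the 3x guesstimate was reached.

```python
def tips_spread(nominal_yield: float, tips_yield: float) -> float:
    """Breakeven inflation: nominal yield minus TIPS (real) yield, in percent."""
    return nominal_yield - tips_yield


# Hypothetical yields, for illustration only.
example_spread = tips_spread(nominal_yield=0.6, tips_yield=-0.4)

# Spread levels quoted in the text, in percent.
spread_2019, spread_trough = 1.8, 0.5
spread_april_low, spread_april_high = 1.0, 1.2

drop = spread_2019 - spread_trough
recovered_low = (spread_april_low - spread_trough) / drop
recovered_high = (spread_april_high - spread_trough) / drop

print(f"Example spread: {example_spread:.1f}%")
print(f"Share of the drop recovered in April: {recovered_low:.0%} to {recovered_high:.0%}")
```

On these numbers, inflation expectations have made up roughly two-fifths to half of the fall since 2019.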

The very idea of having Congress spend an extra $5 trillion on top of what is already being done is more than my little fiscally-conservative heart can comprehend just now. Politically, though, I expect Congress will find it in their hearts/re-election campaigns to have another round of stimulus. The Federal Reserve has even called on Congress to spend more, so have no fears of monetary offset hampering anything. Here is a monetarist arguing the Fed needs to do a great deal more to ensure spending expectations don’t fall. One of the points, though, is that we should not expect that hyperinflation is around the corner.

On that first day, most students suggested looking at the stock market. From Feb 21-Mar 23 the Dow lost 10,000 points – 1/3 of its value. Since then it has recovered more than half. Notice that the drop came BEFORE quarantine and that the stock market has been recovering even as unemployment has climbed to record heights. This is another reason I don’t recommend imagining that the stock market gives a clear and unbiased view of what’s going on in “the economy”! The situation right now is clearly much worse than it was a month ago, so trying to read current conditions off a rising stock market would not make sense. If I wanted to give it the best spin possible, I’d say the stock market is predicting better times ahead despite how bad things currently are.
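
One thing worth keeping in mind with stock market percentages is that losses and gains are not symmetric. Here is a rough sketch using round numbers implied by the text: a 10,000-point drop that is 1/3 of the index puts the starting level near 30,000. These are approximations for illustration, not actual closing values.

```python
drop_points = 10_000
drop_fraction = 1 / 3

# Levels implied by "10,000 points is 1/3 of its value" (approximate).
implied_peak = drop_points / drop_fraction
trough = implied_peak - drop_points

# Suppose exactly half of the lost points have been regained.
halfway_level = trough + 0.5 * drop_points

gain_from_trough = halfway_level / trough - 1
still_below_peak = 1 - halfway_level / implied_peak
gain_needed_for_full_recovery = implied_peak / trough - 1

print(f"Gain from the trough so far: {gain_from_trough:.0%}")
print(f"Still below the peak by: {still_below_peak:.1%}")
print(f"Gain from the trough needed to get all the way back: {gain_needed_for_full_recovery:.0%}")
```

In other words, a one-third drop needs a 50% gain to undo, so regaining half the lost points still leaves the index well short of where it started.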

A few other data points:

- 59% of Americans say they can social distance as long as needed, which is up from a few weeks ago. (Gallup)

- Western European countries started reopening earlier than we have and they are starting to see an increase in cases and deaths again. Now, so far that’s only a 3-day trend and it could just be a blip, but it’s not encouraging.

- Most people are actually behaving like decent, responsible people during the crisis – and they usually do.