John Ioannidis, a professor of medicine at Stanford University, fears that the draconian measures to enforce social distancing across Europe and the United States could end up causing more harm than the pandemic itself. He believes that governments are acting on exaggerated claims and incomplete data, and that a priority must be obtaining a more representative sample of the populations currently suffering coronavirus infections. I agree that additional data would be enormously valuable but, following Saloni Dattani, I think we have more warrant for strong measures than Ioannidis implies.

Like Ioannidis’ Stanford colleague Richard Epstein, I think that estimates of a relatively small overall fatality rate are plausible projections for most of the developed world, and especially the United States. Unlike Epstein, I think those estimates are conditional on the radical social distancing (and self-isolation) measures currently being pushed, rather than something that can be assumed. I am not in a position to challenge Ioannidis’ understanding of epidemiology. Others have used his piece as an opportunity to test and defend the assumptions of the worst-case scenarios.

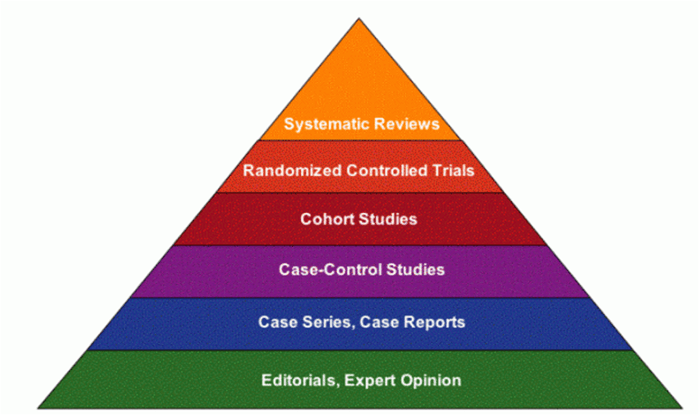

Nevertheless, I can highlight the epistemic assumptions underlying Ioannidis’ pessimism about social distancing interventions. Ioannidis is a famous proponent (and occasional critic) of Evidence-based Medicine (EBM). Although open to refinement, at its core EBM argues that strict experimental methods (especially randomized controlled trials) and systematic reviews of published experimental studies with sound protocols are required to provide firm evidence for the success of a medical intervention.

The EBM movement was born out of a deep concern of its founder, Archie Cochrane, that clinicians wasted scarce resources on treatments that were often actively harmful for patients. Cochrane was particularly concerned that doctors could be dazzled or manipulated into using a treatment based on some theorized mechanism that had not been subject to rigorous testing. Only randomized controlled trials supposedly prove that an intervention works because only they minimize the possibility of a biased result (where characteristics of a patient or treatment path other than the intervention itself have influenced the result).

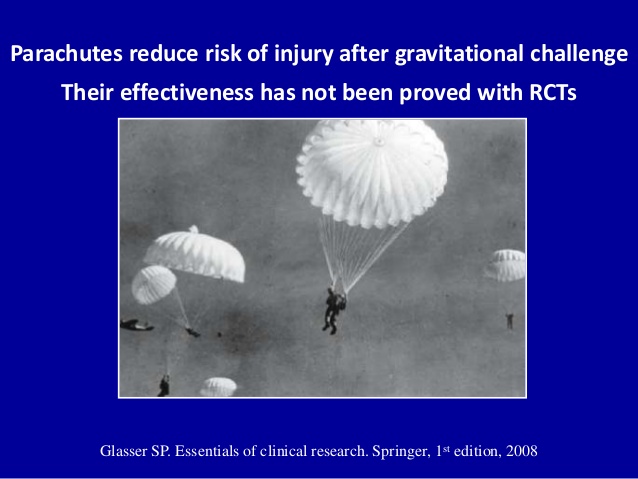

So when Ioannidis looks for evidence that social distancing interventions work, he reaches for a Cochrane Review that emphasizes experimental studies over other research designs. As is often the case for a Cochrane Review, many of the results point to uncertainty or to relatively small effects in the existing literature. But is this because social distancing doesn’t work, or because RCTs are bad at measuring its effectiveness under pandemic circumstances (the circumstances where it might actually count)? The classic rejoinder to EBM proponents is that we know that parachutes can save lives but we can never subject them to an RCT. Effective pandemic interventions could suffer similar problems.

Nancy Cartwright and I have argued that there are flaws in the methodology underlying EBM. A positive result for treatment against control in a randomized controlled trial shows you that an intervention worked in one place, at one time, for one set of patients, but not why it worked or whether to expect it to work again in a different context. EBM proponents try to solve this problem by synthesizing the results of RCTs from many different contexts, often to derive an average effect size that is taken to show a treatment can be expected to work overall or typically. The problem is that, without background knowledge of what determined the effect of an intervention, there is little warrant to be confident that this average effect will apply in new circumstances. Without understanding the mechanism of action, or what we call a theory of change, such inferences rely purely on induction.

The opposite problem is also present. An intervention that works for some specific people or in some specific circumstances might look unpromising when it is tested in a variety of cases where it does not work. It might not work ‘on average’. But that does not mean it is ineffective when the mechanism is fit to solve a particular problem such as a pandemic situation. Insistence on a narrow notion of evidence will mean missing these interventions in favor of ones that work marginally in a broad range of cases where the answer is not as important or relevant.
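The point about averages can be made concrete with a toy calculation. The sketch below uses invented numbers, not results from any study: five imagined trial contexts, in four of which an intervention barely works and in one of which (a publicized outbreak) it works well. The context names, the effect sizes, and the use of a simple unweighted mean as a stand-in for a pooled estimate are all assumptions made for illustration.

```python
from statistics import mean

# Hypothetical risk reductions (fraction of infections prevented) in five
# imagined trial contexts. These numbers are invented for illustration only.
effects_by_context = {
    "ordinary winter, school A": 0.00,
    "ordinary winter, school B": 0.02,
    "ordinary winter, office": 0.01,
    "ordinary winter, care home": 0.02,
    "publicized outbreak": 0.40,
}


def pooled_effect(effects):
    """Unweighted mean effect across contexts (a crude stand-in for pooling)."""
    return mean(effects.values())


def strongest_context(effects):
    """Return the (context, effect) pair with the largest effect."""
    return max(effects.items(), key=lambda item: item[1])


average = pooled_effect(effects_by_context)
context, effect = strongest_context(effects_by_context)

print(f"Average effect across contexts: {average:.0%}")
print(f"Effect in '{context}': {effect:.0%}")
```

Read on its own, the average would suggest a marginal intervention. It says nothing about the one context in which the mechanism actually fits the problem.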

Thus even high-quality experimental evidence needs to be combined with strong background scientific and social scientific knowledge established using a variety of research approaches. Sometimes an RCT is useful to clinch the case for a particular intervention. But sometimes other sources of information can make the case more strongly than a putative RCT can, especially when time is of the essence.

In the case of pandemics, there are several reasons to hold back from making RCTs (and study designs that try to imitate them) decisive or required for testing social policy:

- There is no clear boundary between treatment and control groups since, by definition, an infectious disease can spread between and influence groups unless they are artificially segregated (rendering the experiment less useful for making broader inferences).

- The outcome of interest is not for an individual patient but the communal spread of a disease that is fatal to some. The worst-case outcome is not one death, but potentially very many deaths caused by the chain of infection. A marginal intervention at the individual level might be dramatically effective in terms of community outcomes.

- At least some people will behave differently, and be more willing to alter their conduct, during a widely publicized pandemic than they would in response to hygiene interventions in ordinary times. Although this principle might be testable in different circumstances, the actual effect of the intervention won’t be known until it is tried in the reality of a pandemic.
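The second point in the list above, that a marginal effect on individuals can compound into a large effect on the community, can be illustrated with a deliberately simple branching calculation. This is a sketch under stated assumptions, not an epidemiological model: the baseline reproduction number, the 20% individual-level reduction, and the number of generations are hypothetical values, and the calculation ignores the depletion of susceptible people.

```python
def cumulative_cases(reproduction_number, generations):
    """Expected cumulative cases from one initial case in a simple branching model.

    Each case infects `reproduction_number` others per generation, with no
    depletion of susceptible people (a deliberate simplification).
    """
    return sum(reproduction_number ** k for k in range(generations + 1))


# Hypothetical inputs, chosen only for illustration.
BASELINE_R = 2.5             # assumed average infections caused by each case
INDIVIDUAL_REDUCTION = 0.20  # assumed 20% cut in each person's transmission
GENERATIONS = 10             # assumed number of links in the chain of infection

reduced_r = BASELINE_R * (1 - INDIVIDUAL_REDUCTION)
baseline_total = cumulative_cases(BASELINE_R, GENERATIONS)
reduced_total = cumulative_cases(reduced_r, GENERATIONS)
community_reduction = 1 - reduced_total / baseline_total

print(f"Individual-level reduction: {INDIVIDUAL_REDUCTION:.0%}")
print(f"Community-level reduction in cumulative cases: {community_reduction:.0%}")
```

Under these assumed inputs, a one-fifth cut in each person's transmission removes the large majority of cumulative cases, because the reduction applies at every link in the chain of infection rather than once.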

This means that, rather than narrowly focusing on evidence from EBM and behavioral psychology (or ‘nudge’ approaches), policymakers responding to pandemics must look to insights from political economy and social psychology, especially on how to shift norms towards greater hygiene and social distancing. Even without any bright new ideas, traditional public health methods of clear guidance and occasionally enforced sanctions are having some effect.

What evidence do we have at the moment? Right now, there is an increasing body of defeasible knowledge about the mechanisms by which the coronavirus spreads. Our knowledge of existing viruses with comparable characteristics indicates that effectively implemented social distancing can be expected to slow its spread, and that measures like face masks might slow the spread when physical distancing isn’t possible.

We also have some country- and city-level policy studies. We saw exponential growth of cases in China before extreme measures brought the virus under control. We saw immediate quarantine and contact tracing of cases in Singapore and South Korea that were effective without further draconian measures but required excellent public health infrastructure.

We have now also seen what looks like exponential growth in Italy, followed by a lockdown that appears to have slowed the growth of cases, though not yet of deaths. Some commentators do not believe that Italy is a relevant case for forecasting other countries. Was exponential growth a normal feature of the virus, or something specific to Italy and its aging population that might not be repeated in other parts of Europe? The suggestion that it is specific to Italy seems odd at this stage, given China’s similar experience. The nature of case studies is that we do not know with certainty what all the factors are while they are in progress. We are about to learn more, as some countries have chosen a more relaxed policy.

Is there an ‘evidence-based’ approach to fighting the coronavirus? As it is so new: no. This means policymakers must rely on epistemic practices that are more defeasible than the scientific evidence we are used to hearing about. But that does not mean that defaulting to light-touch intervention is prudent during a pandemic response. Instead, approaches that use models with reasonable assumptions, based on evidence from unfolding case studies, are the best we can do. Right now, I think, given my moral commitments, this suggests policymakers should err on the side of caution, physical distancing, and isolation while medical treatments are tested.

[slightly edited to distinguish my personal position from my epistemic standpoint]